While GPT-4 is one of the most powerful AI models available today, it’s far from flawless. As advanced as it seems, there are still crucial limitations that shape how it can—and can’t—be used in real-world scenarios. Understanding these boundaries isn’t just a technical concern—it’s essential for developers, businesses, educators, and anyone building on top of this technology.

These limitations matter most to the developers, researchers, and policymakers who depend on LLMs for content generation, automation, research, and innovation.

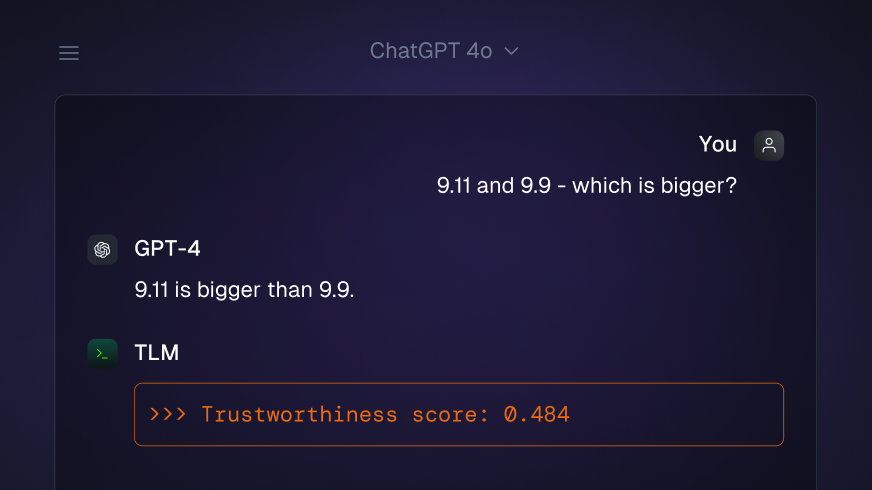

GPT-4 Continues to Hallucinate – And It’s Not Improving Quickly

GPT-4 is significantly more factually accurate than GPT-3.5, but it still “hallucinates”: it can state incorrect or fabricated information with complete confidence.

Example: Ask it for the latest paper by a lesser-known scientist, and it may invent a publication or misattribute authorship. These errors are dangerous in fields like medicine, finance, and law, where accuracy is non-negotiable.

No Real-Time Data Access Without Plugins

However sophisticated it is, GPT-4 has no internet connection of its own. It can reach live data only when supplemented with plugins such as the Browsing Plugin or combined with real-time APIs.

That is:

- It isn’t aware of events after its training cut-off, which varies by version (e.g., April 2023).

- It cannot natively help with stock prices, the latest news, or recently released research.

This limitation forces developers to create their own retrieval-augmented systems or integrate third-party tools for dynamic data.
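As a rough illustration of what such a retrieval-augmented setup involves, the sketch below ranks a handful of fresh snippets by simple keyword overlap and prepends the best matches to the prompt. Everything here is an assumption made for illustration: the `Document` type, the `retrieve` and `build_prompt` helpers, and the overlap scoring are hypothetical, and a production system would more likely use embeddings and a vector store. The resulting string would then be sent to the model through whatever API client you use.

```python
from dataclasses import dataclass


@dataclass
class Document:
    """A snippet of up-to-date text plus a label for where it came from."""
    source: str
    text: str


def score(query: str, doc: Document) -> int:
    """Count how many distinct query words appear in the document text."""
    query_words = set(query.lower().split())
    doc_words = set(doc.text.lower().split())
    return len(query_words & doc_words)


def retrieve(query: str, docs: list[Document], k: int = 2) -> list[Document]:
    """Return up to k documents with non-zero overlap, best match first."""
    ranked = sorted(docs, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked if score(query, d) > 0][:k]


def build_prompt(query: str, docs: list[Document]) -> str:
    """Prepend retrieved snippets so the model can answer from fresh data."""
    context = "\n".join(f"[{d.source}] {d.text}" for d in retrieve(query, docs))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )
```

Telling the model to rely only on the supplied context, and to admit when that context falls short, is one common way to reduce the chance that it fills gaps from stale training data.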

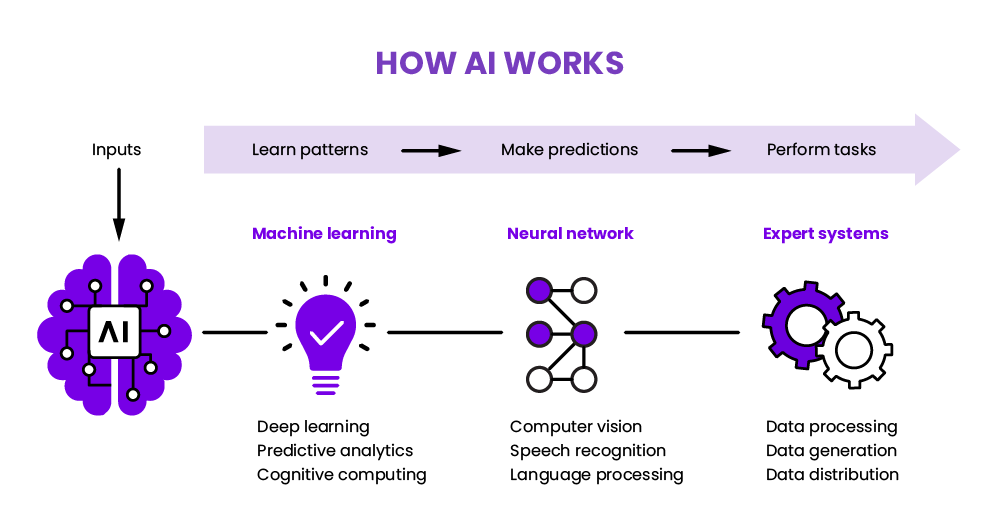

It Doesn’t Understand, It Predicts

GPT-4 does not reason or infer like a human being. It predicts the most probable next token in a sequence, so its intelligence is statistical, not cognitive.

What does that mean?

- It doesn’t “know” what it’s saying.

- It can give contradictory responses depending on phrasing.

- It lacks true comprehension, intent, and context retention across long, dynamic conversations.
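To make the idea of token prediction concrete, here is a toy sketch of greedy decoding: raw scores are turned into probabilities with a softmax, and the highest-probability token wins. The scores below are invented for illustration and do not come from any real model; the function names are likewise hypothetical.

```python
import math


def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into a probability distribution over tokens."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


def most_probable_token(logits: dict[str, float]) -> str:
    """Greedy decoding: pick the single highest-probability token."""
    probs = softmax(logits)
    return max(probs, key=probs.get)


# Hypothetical scores for the token after "The capital of Australia is".
# The values are invented for illustration only.
toy_logits = {"Canberra": 2.1, "Sydney": 2.4, "Melbourne": 0.3}
print(most_probable_token(toy_logits))
```

In this toy setup the top-scoring token is emitted whether or not it is true. Nothing in the selection step checks facts, which is why a fluent answer and a correct answer are not the same thing.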

Weak Reasoning in Intricate Work

GPT-4 is weak in multi-step reasoning, sophisticated math, and symbolic manipulation.

For example:

- It can’t consistently solve complex proofs.

- It struggles with multi-layer reasoning unless it is explicitly guided step by step.

- It frequently offers false but believable-looking responses in scientific scenarios.

This is a major disadvantage for software debugging, physics, and engineering applications.
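One practical response is to check a model’s numeric claims with deterministic code instead of trusting them. The sketch below is a minimal example under stated assumptions: `evaluate` and `claim_holds` are hypothetical helpers, and they cover only basic arithmetic on numeric literals. It parses the expression with Python’s `ast` module so that no model-supplied text is ever passed to `eval()`.

```python
import ast
import operator

# Only these binary operators are allowed; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.Pow: operator.pow,
}


def evaluate(expression: str) -> float:
    """Evaluate a plain arithmetic expression without using eval()."""

    def walk(node: ast.AST) -> float:
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")

    return walk(ast.parse(expression, mode="eval").body)


def claim_holds(expression: str, claimed: float, tol: float = 1e-9) -> bool:
    """Return True only if the model's claimed result matches the real one."""
    return abs(evaluate(expression) - claimed) <= tol
```

For instance, if the model asserts that `17 * 23 + 4` equals 385, `claim_holds("17 * 23 + 4", 385)` returns `False`, because the expression actually evaluates to 395. A real pipeline would also want to cap exponent sizes before evaluating untrusted input.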

Not Much Creativity Beyond Patterns

GPT-4 appears creative—but it’s imitative, not truly imaginative. It stitches together patterns from its training data.

That’s why:

- GPT-4’s poems, stories, or artworks tend to be repetitive.

- It lacks depth of emotion or cultural nuance.

- It does not surprise, provoke, or subvert expectations the way a human artist can.

Even when composing music or programming game scenarios, its output tends to become formulaic unless it is given very precise and inventive instructions.

There Is No Real Visual Understanding

Even GPT-4’s vision input abilities (in its Vision model) are limited:

- It can’t interpret sarcasm in memes.

- It frequently misidentifies structures in composite images.

- It lacks spatial awareness and nuanced contextual recognition in imagery.

In applications such as medical imaging, security, or building design, this is a deal-breaker.

Why These Limits Matter

Understanding GPT-4’s inherent limitations is not merely an academic exercise; it is pivotal to building safe, effective, and reliable AI systems.

Neglecting these flaws results in:

- Misinformation at scale

- Subpar judgment

- Overreliance on flawed automation

Developers and AI product builders must design around these weaknesses—whether by fine-tuning, adding human-in-the-loop systems, or supplementing GPT-4 with domain-specific models.
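A human-in-the-loop step can be as simple as a routing rule that decides which outputs ship automatically and which wait for a reviewer. The sketch below is only one possible policy: the `Draft` type, the `route` function, and the `has_cited_source` flag are hypothetical, and the high-stakes domains are borrowed from the examples given earlier in this article.

```python
from dataclasses import dataclass

# Domains where accuracy is non-negotiable (taken from the article's examples).
HIGH_STAKES_DOMAINS = {"medicine", "finance", "law"}


@dataclass
class Draft:
    """A piece of model output awaiting a publish decision."""
    domain: str
    text: str
    has_cited_source: bool  # assumed to be set by an upstream check


def route(draft: Draft) -> str:
    """Decide whether a draft can ship or needs a human reviewer first."""
    if draft.domain in HIGH_STAKES_DOMAINS:
        return "human_review"  # always reviewed, regardless of sourcing
    if not draft.has_cited_source:
        return "human_review"  # unsupported claims are never auto-published
    return "auto_publish"
```

The design choice here is to fail closed: anything high-stakes or unsupported goes to a person, and only low-risk, sourced output is published without review.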

GPT-4 is strong, but not all-knowing. Understanding its limitations lets us use it more responsibly and build smarter, safer products.